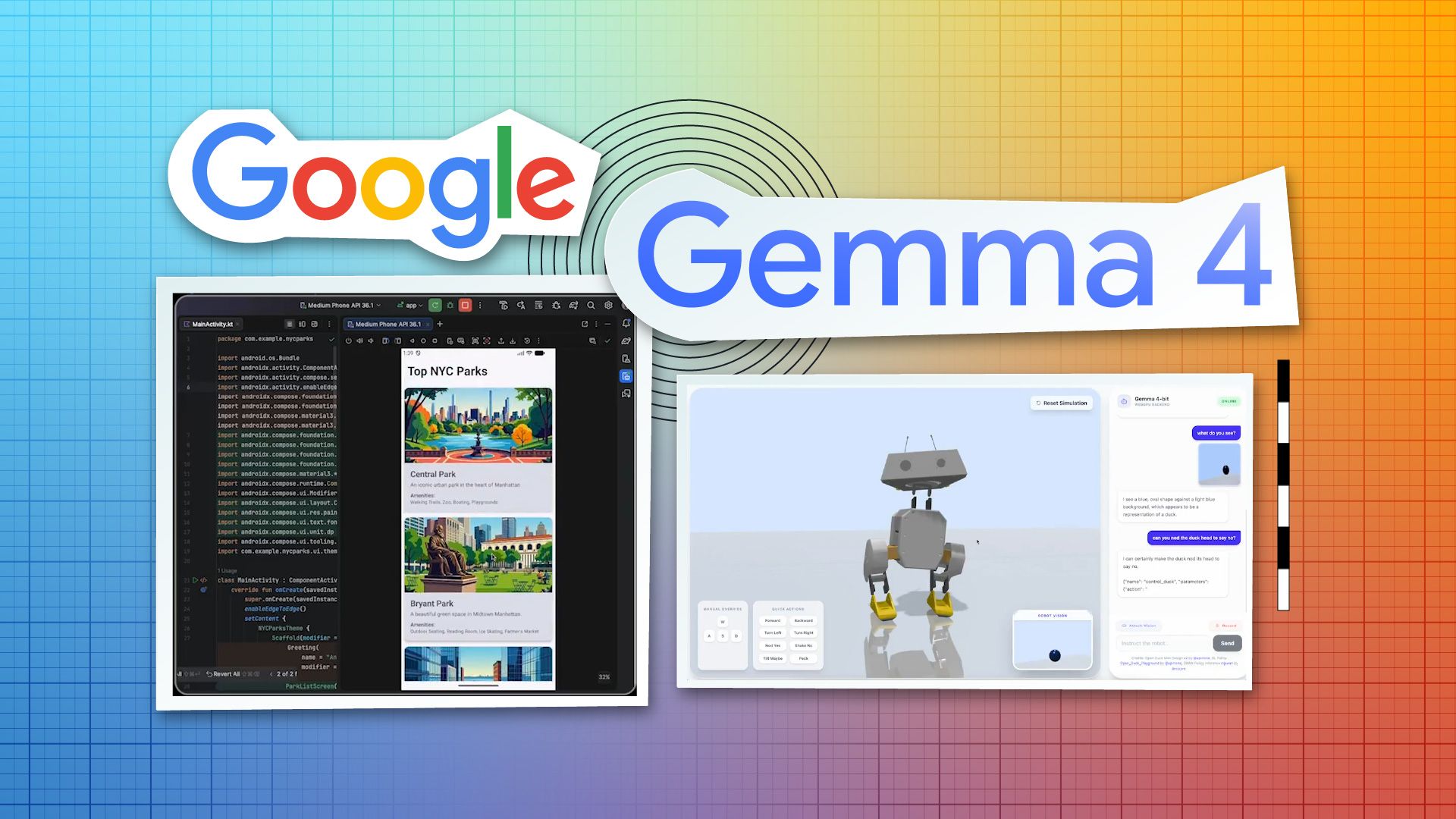

Google has released Gemma 4, calling it "our most intelligent open models to date." Built from the same research behind Gemini 3, Gemma 4 brings flagship-level reasoning to local hardware. Shipped under an Apache 2.0 license, it represents a major step forward for highly capable models that you can actually run on your own machine without relying on cloud APIs or paying per-token fees.

What Apache 2.0 Actually Means Here

Open-weight AI models come with a range of licensing terms, and the differences are not academic. Meta's Llama models carry custom licenses that restrict commercial use above certain user thresholds. Mistral has mixed its licensing across releases. Google choosing Apache 2.0 for Gemma 4 removes the ambiguity entirely.

For companies evaluating whether to build on open models or continue paying for cloud API access, this is the relevant detail. A model you can run on your own hardware, fine-tune on your own data, and integrate into your own products without a licensing conversation is a fundamentally different asset from one gated behind usage terms that might change.

Built From Gemini 3 Research

According to the Google Developers Blog announcement, Gemma 4 is purpose-built for "advanced reasoning and agentic workflows," meaning multi-step tasks, tool use, and structured output. Previous Gemma releases tracked their corresponding Gemini generations but with meaningful capability gaps. The question with each release is always how much of the parent model's capability actually transfers down.

Independent benchmarks will determine whether the reasoning capabilities are genuinely competitive with Gemini 3 or represent a diluted version. History suggests the latter, but the gap has been closing with each generation.

The Case for Running Locally

Apache 2.0 means Gemma 4 can run entirely on your own infrastructure, from edge devices to local workstations. Organizations with data privacy requirements can fine-tune on proprietary data and deploy internally without sending anything to external endpoints. But beyond privacy, having this level of reasoning natively on local hardware changes the cost equation. The structure flips from variable API pricing, where the bill grows with every call, to a fixed hardware cost whose per-query share shrinks as usage scales.
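To make that cost flip concrete, here is a minimal Python sketch of the break-even arithmetic. It is an illustration under stated assumptions, not a pricing model: the function names, the flat per-query API price, the one-time hardware cost, and the per-query running cost (electricity, maintenance) are all hypothetical placeholders, and none of the figures come from Google or any API provider.

```python
import math
from typing import Optional


def api_cost(queries: int, price_per_query: float) -> float:
    """Total API spend: grows linearly with every call (assumed flat price)."""
    return queries * price_per_query


def local_cost_per_query(
    queries: int, hardware_cost: float, running_cost_per_query: float = 0.0
) -> float:
    """Per-query cost of self-hosting: the fixed hardware cost is spread
    over all queries, plus an assumed per-query running cost."""
    if queries <= 0:
        raise ValueError("queries must be positive")
    return hardware_cost / queries + running_cost_per_query


def break_even_queries(
    hardware_cost: float,
    api_price_per_query: float,
    running_cost_per_query: float = 0.0,
) -> Optional[int]:
    """Smallest query count at which self-hosting costs no more than the API.

    Returns None when running locally costs at least as much per query as
    the API, in which case the hardware never pays for itself.
    """
    saving_per_query = api_price_per_query - running_cost_per_query
    if saving_per_query <= 0:
        return None
    return math.ceil(hardware_cost / saving_per_query)


if __name__ == "__main__":
    # Hypothetical inputs only; substitute your own hardware quote and API bill.
    hardware = 2000.0
    api_price = 0.5
    running = 0.25
    n = break_even_queries(hardware, api_price, running)
    print(f"Break-even at {n} queries")
    for queries in (1_000, 10_000, 100_000):
        per_query = local_cost_per_query(queries, hardware, running)
        print(f"{queries} queries: local {per_query:.4f} vs API {api_price:.4f} per query")
```

The sketch deliberately ignores factors that matter in practice, such as hardware depreciation, engineering time, and differences in model quality, so treat the break-even point as a rough lower bound rather than a forecast.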

For developers evaluating local models, the combination of Apache 2.0 licensing and Gemini 3 lineage makes Gemma 4 one of the most compelling local options currently available.

The Competitive Picture

Google is not releasing Gemma 4 out of generosity. Open models drive adoption of Google's broader AI ecosystem: cloud infrastructure, development tools, and enterprise services. By making Gemma 4 capable and permissively licensed, Google pressures competitors on two fronts: it undercuts closed API providers on price, and it challenges other open-model efforts from Meta, Mistral, and the broader open-source community to match both capability and licensing terms.

This also puts pressure on companies charging for API access to models at similar capability levels. If a freely deployable model handles 80% of what a paid API does, the paid API needs to justify its premium with the remaining 20% or with convenience and support that self-hosting cannot match.

What to Watch

Model size variants, hardware requirements, and practical fine-tuning costs will determine who can actually run Gemma 4 effectively. A model that requires enterprise-grade GPU clusters is a different proposition than one that runs on a single workstation. But the licensing is clear, the lineage is strong, and the trajectory of open models continues pointing in one direction: more capability, fewer restrictions, and less reason to default to paying for cloud AI when you can own the stack yourself.