A new open-source method called Seen2Scene can complete partial 3D scans into full, coherent scenes. The system addresses a fundamental constraint in 3D digitization: photogrammetry, LiDAR, and RGB-D captures routinely leave holes behind furniture, under tables, or in tight corners. Seen2Scene fills those gaps, and it does so by training directly on messy, incomplete real-world scans rather than relying on perfect synthetic data.

Why Real Data Matters

Prior approaches to 3D scene completion faced a paradox. To train a model to fill in missing geometry, you need complete ground-truth scenes for supervision. But complete real-world scans are effectively unobtainable, because any physical capture has occluded regions the sensor never sees. So researchers turned to synthetic datasets like 3D-FRONT, collections of clean, computer-generated rooms that provide perfect geometry. The trade-off is that models trained on tidy synthetic bedrooms struggle with the clutter, irregular layouts, and varied geometry of actual scanned spaces. This domain gap between synthetic training data and real-world inference has been a persistent bottleneck.

Seen2Scene breaks this cycle. The method introduces visibility-guided flow matching, a training strategy that only supervises the model on regions the scanner actually observed. Unknown areas are explicitly masked out during training rather than filled in with synthetic stand-ins. This lets the generative model learn realistic geometry distributions from partial real data without ever requiring a complete ground-truth scene. The full technical details are described in the paper on arXiv.

How It Works

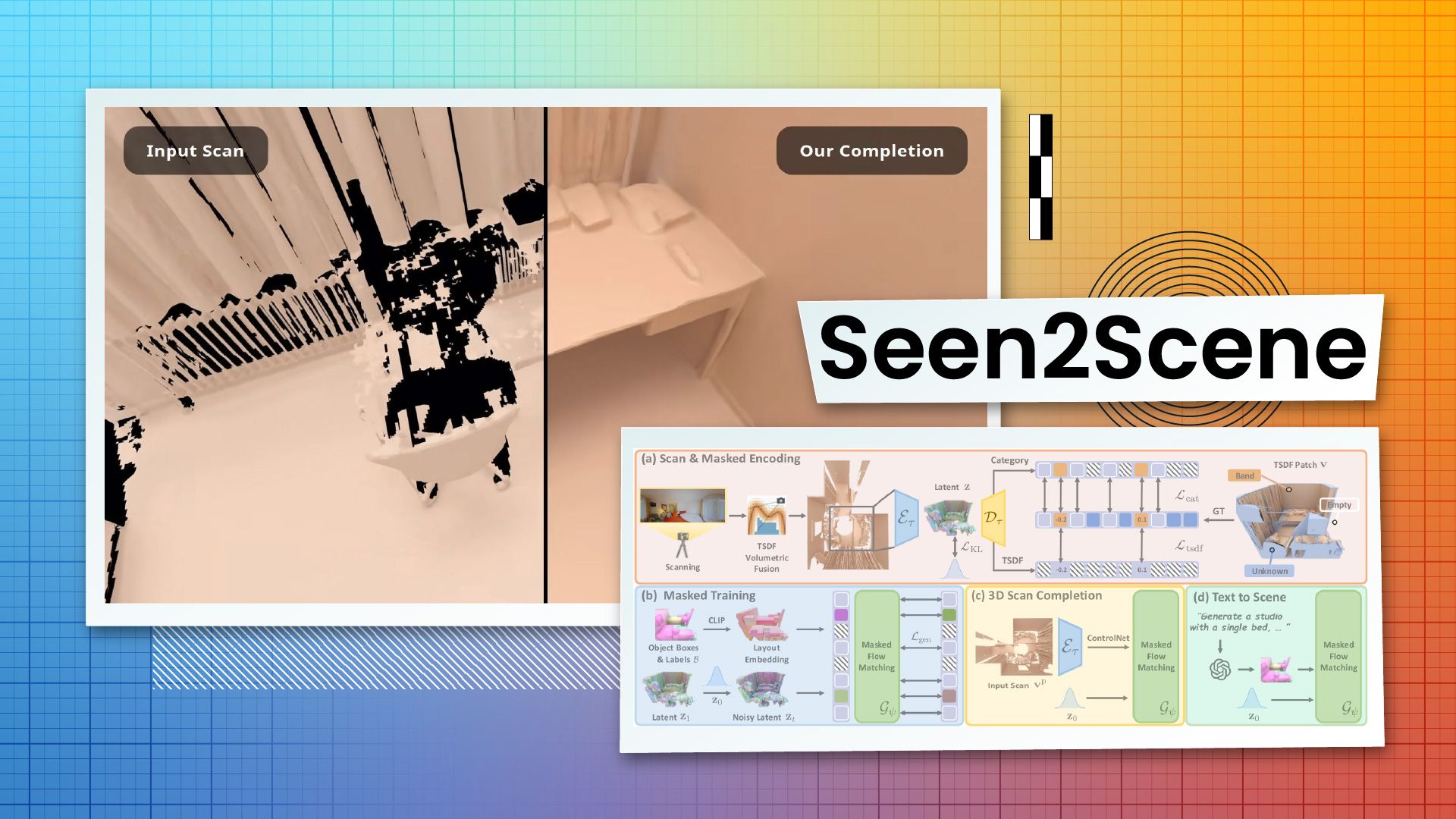

The technical pipeline operates on truncated signed distance fields (TSDFs) stored in sparse voxel grids. A masked sparse variational autoencoder encodes partial scans into a latent representation, and a sparse transformer models the complex spatial relationships within the scene. The visibility mask is the key mechanism: during flow matching (a generative modeling technique related to diffusion models), the loss function ignores unobserved voxels entirely. The model learns to generate plausible completions for those regions without being penalized for not matching data that was never captured.
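To make the masking idea concrete, here is a minimal sketch of a visibility-masked flow matching loss. It is not the authors' implementation: it assumes a straight-line interpolation path between noise and data (one common flow matching formulation), works on a small dense array rather than sparse latents, and the names `masked_flow_matching_loss` and `predict_velocity` are hypothetical.

```python
import numpy as np

def masked_flow_matching_loss(x1, visible, predict_velocity, rng):
    """Flow matching loss averaged over observed voxels only.

    x1:               (N, C) array of per-voxel target features (assumed layout).
    visible:          (N,) boolean array, True where the scanner observed the voxel.
    predict_velocity: hypothetical model callable, (xt, t) -> (N, C) array.
    rng:              numpy random Generator.
    """
    x0 = rng.standard_normal(x1.shape)   # noise sample at t = 0
    t = rng.uniform()                    # random time in [0, 1)
    xt = (1.0 - t) * x0 + t * x1         # point on the straight path (assumption)
    target = x1 - x0                     # velocity of that straight path
    pred = predict_velocity(xt, t)

    per_voxel = np.mean((pred - target) ** 2, axis=1)  # (N,) squared error
    mask = visible.astype(per_voxel.dtype)
    # Unobserved voxels get zero weight, so they contribute no gradient signal.
    return float(np.sum(per_voxel * mask) / max(mask.sum(), 1.0))
```

With this weighting, whatever values sit in the unobserved voxels of the target have no effect on the loss, which is the property the article describes: the model is never penalized for failing to match data that was not captured.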

Conditioning works through 3D layout boxes that define the spatial arrangement of a scene. These boxes can come from a partial scan (for completion tasks) or from a text prompt routed through an LLM that translates natural language into spatial layouts (for generation tasks). A ControlNet-style fine-tuning mechanism bridges the two use cases, letting the same base model handle both scan completion and text-to-3D generation. The implementation is available on GitHub.
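The article does not say how the layout boxes are encoded before they reach the model. As one illustrative possibility only, the sketch below rasterizes axis-aligned boxes into a voxel label grid that could serve as a conditioning signal. The function name `rasterize_layout`, the `(label_id, min_xyz, max_xyz)` box format, and the axis-aligned assumption are all hypothetical.

```python
import numpy as np

def rasterize_layout(boxes, grid_shape, voxel_size):
    """Paint axis-aligned 3D boxes into an integer label grid.

    boxes:      list of (label_id, min_xyz, max_xyz), coordinates in metres,
                measured from the grid origin (hypothetical format).
    grid_shape: (X, Y, Z) number of voxels per axis.
    voxel_size: edge length of one voxel in metres.
    """
    grid = np.zeros(grid_shape, dtype=np.int32)  # 0 means "no box here"
    shape = np.array(grid_shape)
    for label_id, lo, hi in boxes:
        # Convert metric corners to voxel indices, clamped to the grid bounds.
        i0 = np.clip(np.floor(np.asarray(lo) / voxel_size).astype(int), 0, shape)
        i1 = np.clip(np.ceil(np.asarray(hi) / voxel_size).astype(int), 0, shape)
        # Later boxes overwrite earlier ones where they overlap.
        grid[i0[0]:i1[0], i0[1]:i1[1], i0[2]:i1[2]] = label_id
    return grid

# Example: one 1.0 m x 1.0 m x 0.5 m box in a 4x4x4 grid of 0.5 m voxels.
layout = rasterize_layout([(1, (0.0, 0.0, 0.0), (1.0, 1.0, 0.5))], (4, 4, 4), 0.5)
```

The same grid format would work whether the boxes were detected in a partial scan or produced by an LLM from a text prompt, which is one way a single conditioning pathway could serve both tasks.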

Results on Real Scans

The team evaluated Seen2Scene on ScanNet++ and ARKitScenes, two datasets of actual 3D-scanned indoor environments. Compared against baselines including SG-NN and NKSR, the method produces more geometrically complete and surface-accurate results, particularly in cluttered, complex rooms where prior methods falter. The outputs look like plausible real spaces, not the overly smooth, furniture-catalog aesthetic of synthetic-data-trained models.

The system's outputs are currently voxel-based geometry rather than production-ready textured meshes, meaning integration into standard digital content creation (DCC) tools would require additional processing, such as surface extraction and texturing. But as a foundation for turning partial scans into complete spatial data, it addresses a costly gap in the capture pipeline. The research comes from a team at the Technical University of Munich and the University of Virginia. The full paper and code repository are available publicly.