Voice dictation startup Willow has launched Atlas 1, a new speech-to-text model it calls a "frontier" system for real-time transcription. The YC-backed company claims the model outperforms major providers like ElevenLabs, Deepgram, and OpenAI "by a wide margin." If Willow's internal benchmarks hold up under independent scrutiny, Atlas 1 would represent a meaningful shift in the baseline accuracy of real-time dictation tools.

The "Human-Powered Infrastructure" Claim

The most unusual part of the announcement is Willow's description of Atlas 1 as being built on "the first scalable, human-powered transcription infrastructure ever built for real-time dictation." The company has not published technical details about what this means in practice. The phrasing suggests a data pipeline where human transcribers generate or validate training data specifically tuned for dictation use cases, rather than relying on the web-scraped audio datasets that underpin most large STT models.

If that interpretation is correct, the approach has precedent in narrow domains. Medical transcription services have long used human-in-the-loop systems to maintain accuracy on specialized vocabulary. What would be new is applying that methodology at scale to general-purpose dictation and building a model on top of the resulting dataset.

The question is whether this approach can maintain its accuracy advantage as it scales across domains, accents, and recording conditions. A model trained on high-quality dictation data may perform exceptionally well on clean, single-speaker input while struggling with messy or overlapping audio.

How the Benchmarks Stack Up

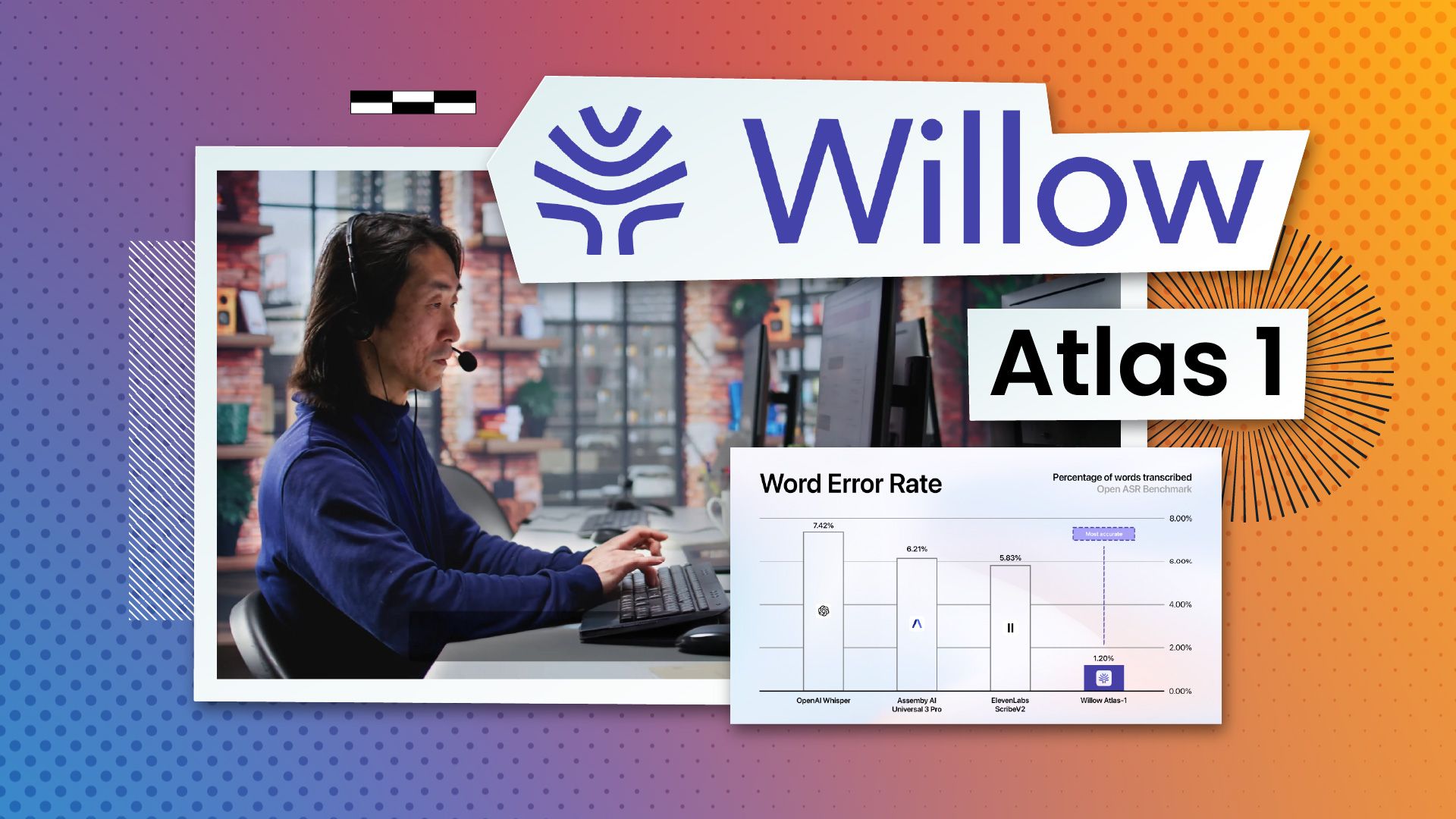

The speech-to-text market has consolidated around a few dominant players. ElevenLabs currently leads the Artificial Analysis leaderboard at roughly a 2.3% word error rate (WER). OpenAI and Deepgram both sit under 5% WER on clean audio.
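For readers unfamiliar with the metric, WER is conventionally computed as the number of word-level substitutions, deletions, and insertions needed to turn the model's output into the reference transcript, divided by the number of words in the reference. The sketch below illustrates that standard definition; it is not Willow's or Artificial Analysis's evaluation code, and the lowercase-and-split normalization is a simplifying assumption (real leaderboards apply their own text normalization rules, which can shift scores noticeably).

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    # Assumption: lowercase + whitespace split stands in for real normalization.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Word-level Levenshtein distance, keeping only the previous row.
    # prev[j] is the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# One dropped word out of four reference words gives a WER of 0.25.
print(word_error_rate("the quick brown fox", "the quick fox"))
```

Because insertions count as errors, WER can exceed 100% on badly garbled output, which is one reason headline figures are only comparable when the test audio and normalization rules match.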

Willow has not published detailed benchmark methodology alongside the Atlas 1 announcement. The claim of outperforming those providers "by a wide margin" is bold given the current state of the field. Top-tier models already cluster within a few percentage points of each other on standard evaluation sets. Outperforming them by a wide margin would mean either a significant WER reduction on established benchmarks or strong performance on a new, more demanding test set.
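To make "a wide margin" concrete, it helps to separate absolute from relative WER improvement. The snippet below is a back-of-the-envelope sketch: the 2.3% baseline is the leaderboard figure cited above, while the 1.5% candidate value is purely hypothetical and is not a number Willow has reported.

```python
def relative_wer_reduction(baseline_wer: float, candidate_wer: float) -> float:
    """Fraction of the baseline's errors that the candidate eliminates."""
    if baseline_wer <= 0:
        raise ValueError("baseline_wer must be positive")
    return (baseline_wer - candidate_wer) / baseline_wer


baseline = 0.023   # roughly the leading WER cited in this article
candidate = 0.015  # HYPOTHETICAL value for illustration only

absolute_gain = baseline - candidate
relative_gain = relative_wer_reduction(baseline, candidate)

print(f"absolute: {absolute_gain * 100:.1f} percentage points")
print(f"relative: {relative_gain * 100:.0f}% fewer errors")
print(f"errors per 1,000 words: {baseline * 1000:.0f} -> {candidate * 1000:.0f}")
```

At error rates this low, a gap of under one percentage point can still mean roughly a third fewer mistakes, so whether a result counts as "wide" depends heavily on which framing a vendor chooses.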

Until Willow releases its benchmark data and allows third-party evaluation, the claim sits in a familiar category: impressive if verified, premature if not. The STT space has seen enough vendor-run benchmarks with favorable test conditions to warrant skepticism toward any self-reported leaderboard result. Independent leaderboards such as Artificial Analysis will provide a clearer picture once the model is tested there. Rival vendors like AssemblyAI and Speechmatics may run their own comparisons, though those carry the same caveat as any vendor-run test.

Willow has raised $4.2 million and reports adoption across Fortune 500 companies for its existing dictation product. Atlas 1 is the company's bid to move from a productivity tool to core infrastructure. Whether it succeeds depends on whether the accuracy claims survive contact with independent testing.