In this week's episode of Denoised, Addy and Joey lay out a roadmap of expectations for 2026, arranging forecasts into three buckets: super confident, very likely, and long shots. Their conversation moves from practical tool predictions that affect day-to-day workflows to industry-wide shifts that could reshape production pipelines.

Super confident bets — tools that will land in 2026

ComfyUI ends up in the hands of a major player

Joey and Addy agree that ComfyUI’s rapid adoption by hobbyists and pros has made it a visible acquisition target. Potential suitors include large cloud providers and established VFX vendors — companies that can scale Comfy's cloud play while preserving its open community. The hosts stress one condition: whichever buyer wins should nurture the ecosystem of custom nodes and plugins, not bury the open foundations.

Real-time video generation becomes usable in the cloud

Both hosts expect a genuinely real-time video model to appear in 2026 — cloud-first rather than local. This would not be simple frame-by-frame inference; it would feel like interacting with a live feed, where changes appear instantly and can be driven by camera input or director controls.

A practical VFX model that keeps source quality

The hosts describe a VFX-focused model that can take high-resolution camera plates, produce PBR maps, remove wires, relight scenes, swap backgrounds and then return non-destructive outputs at original quality — not compressed eight-bit artifacts. Tools like Beeble and Luma are seen as early steps; the prediction is a productized VFX model that integrates into editorial and finishing workflows.

Control beyond text prompts

Expect more interfaces for directing generative output: virtual camera rigs, joysticks, motion tracks and camera-parameter uploads. This is already visible in some tools that accept camera movement or motion data to guide renders. The hosts want real-time control and richer input modalities beyond typed prompts.

Photoreal image models without uncanny valley

One confident call: image models will reach consistent photorealism, producing people and scenes that are visually indistinguishable from real photography. The hosts argue we’re close now and that 2026 could be the year this becomes routine.

Very likely — shifts that will influence strategy and budgets

Resurgence of handcrafted media

As AI-generated material floods feeds, the hosts predict a counter-movement: renewed interest in tangible, handcrafted media — stop-motion, hand-drawn 2D, film formats, collector editions and physical releases. This is a cultural and market reaction, not a technological one.

Nvidia and a market recalibration

With massive valuations tied to AI compute, the hosts predict a market correction, reflecting their view that AGI is still years away. This could affect investment flows into chip suppliers and cloud compute providers.

Legal clarity on model training

Ongoing litigation around what data models can be trained on looks set to move toward clearer rulings or settlements. The discussion will shift from training legality to how outputs are used and whether they infringe IP.

A non-US model that runs locally

Expect a surprise entrant from outside the U.S. that offers high-quality image and video models that are compact, optimized for local execution and ComfyUI-friendly. This would echo past moments when non-US models disrupted assumptions about who controls core tooling.

AI-assisted animated features arrive

The hosts call an AI-heavy animated feature likely within the next year: one that leans on AI for in-between frames and style passes to cut cost and production time substantially, and that could appear at a major festival or on a streamer.

New paradigm for persistent AI-generated worlds

Following demos of world models, expect usable, persistent generative spaces where a virtual camera can move and capture shots. Current demos are short-lived; the shift will be toward duration, state persistence and higher fidelity.

Long shots — ambitious changes worth watching

90-minute live-action cinema from AI studios

Several AI-first studios are attempting full-length projects. The hosts label a true live-action feature driven by generative tech as a long shot for 2026: possible, but still constrained by uncanny valley and performance synthesis limits.

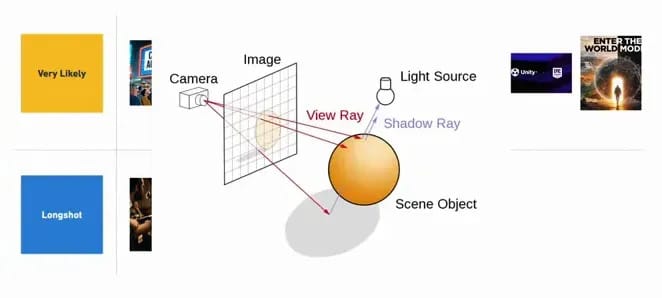

Neural rendering integrated into major DCC tools

The idea: replace or augment ray-traced rendering with neural render passes inside Maya, Blender, Houdini or Unreal. This is a technical and product effort that could land if compute and quality economics improve.

Licensed AI music ecosystems

Partnerships between music licensors and AI platforms could mainstream licensed AI music. If labels cooperate, training datasets become licensed and “ethical” offerings expand. Expect ambient and background music to be largely AI-generated, while marquee artists remain human-driven.

Closing thoughts

The hosts distill a practical, production-first view of the year ahead: incremental but impactful tool releases will change how shots are planned, how VFX is executed and how animation budgets are allocated. Major structural shifts (AGI, full neural replacement of render engines, and studio-level live-action features driven end-to-end by generative models) remain unlikely within 12 months but are plausible longer-term bets.

The next milestone is simple: test the new tools in small, production-representative pilots and update pipeline docs as capabilities and vendor terms evolve. 2026 will be about integrating practical AI into established production rhythms, not about overnight reinvention.