Welcome to VP Land! Project Hail Mary just became the first film to premiere in the stratosphere, with a custom IMAX display sent to 110,000 feet. Meanwhile, back on Earth, we went to the California-Arizona border with an iPhone.

In today's edition:

We tried to recreate a Hollywood car chase on iPhone — here's where the workflow broke down

Val Kilmer appears in a new film via generative AI, in a role he was cast in five years before his death

Google launches two tools to let anyone build and design apps without coding

Handshake AI is paying improv actors $74/hour to train AI on human emotion

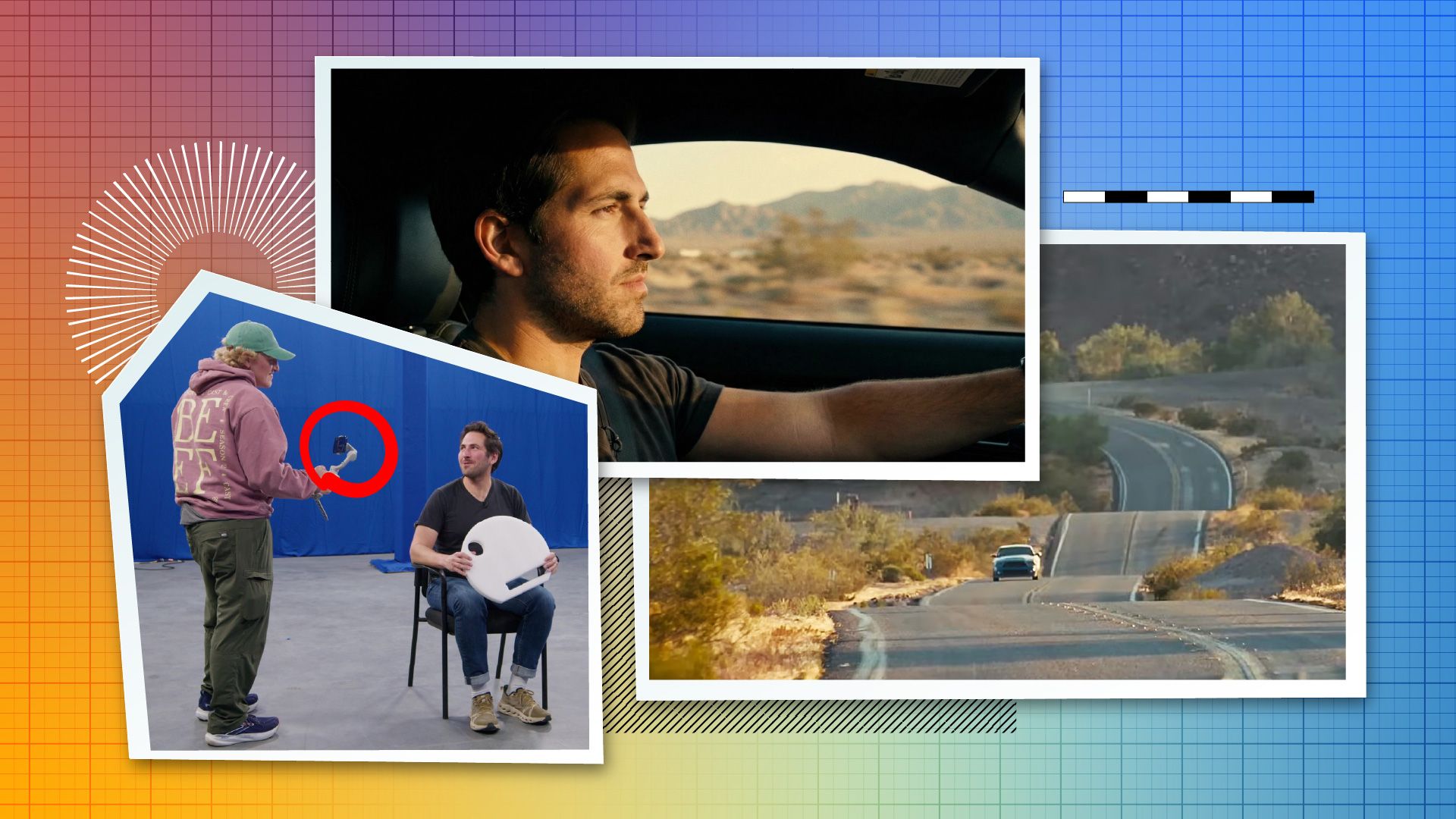

We tried to shoot the One Battle car chase with an iPhone. Here's what we learned about the future of hybrid AI filmmaking.

We ran an experiment to see how far we could push an iPhone to recreate the car chase from One Battle After Another. The goal, as Conrad Curtis at VPX Lab framed it: poor man's budget, rich man's production value. Some of it worked. Some of it didn't.

The plan. Drive out to the desert and shoot real driving plates with an Insta360. For the interior, test two approaches: shoot inside a real car with blue screen outside the windows (aka poor man's process), and shoot the actor in an empty space with no car at all, using a LiDAR scan of a real Mustang to create a digital twin we could composite in later.

Where it broke down. The speed-versus-control problem hit immediately. Traditional compositing gave full control but required days of work and expertise. AI video-to-video models were fast but produced warped faces and inconsistent results. On top of that, the iPhone's dynamic range became a hard ceiling: AI relighting tools can't add information that wasn't captured in the original footage, and the footage couldn't hold up under harsh restyling.

Beeble SwitchX collapsed the speed-versus-control tradeoff. Months after the original shoot, SwitchX, a new video-to-video model built specifically for relighting and restyling, changed the equation. Shadows landed correctly, reflections worked, and we finally had both speed and usable results.

There's more to the story on what held up, what broke down, and what we'd do differently. Read the full article for the complete breakdown.

SPONSOR MESSAGE

Assistants respond. Viktor ships.

Viktor is an AI coworker with its own computer, running inside your Slack workspace.

It connects to 3,000+ tools and chains real workflows across them. Tell Viktor to pull your Meta Ads spend, cross-reference it against Stripe revenue by cohort, and deploy a live dashboard your team can check every morning. It writes the scripts, handles the auth, and ships a working result.

No prompt engineering. No copying data between tabs. One message in Slack. Done.

Most AI tools return text. Viktor returns something you can send to your board or push to production.

Google launches Stitch and AI Studio

Google launched Stitch and AI Studio vibe coding to remove traditional gatekeeping in app development. Stitch generates UI designs from text or images and exports them to Figma or frontend code. AI Studio is a full-stack environment with the Antigravity agent and Firebase integration.

Stitch generates designs without design skills. Input a text description or reference image, and Stitch produces interactive prototypes. Export directly to Figma for refinement or to frontend code for development. The tool handles layout, component selection, and interaction patterns.

AI Studio handles full-stack development. The Antigravity agent manages backend logic, database design, and API integration. Firebase handles hosting and real-time data. You describe what you want to build in plain English, and the agent assembles the workflow.

Designers don't need to code. Developers don't need design skills. Non-technical founders can prototype and launch without hiring specialists. Both tools are available now: Stitch via stitch.withgoogle.com and AI Studio vibe coding via Google AI Studio.

AI and Acting

Two stories show how AI is reshaping performance and acting.

Val Kilmer was cast as Father Fintan in As Deep as the Grave five years before his death, but illness prevented him from shooting. Director Coerte Voorhees used generative AI with the cooperation of Kilmer's estate, combining younger archival images with footage from his final years and using his voice. The film tells the true story of Southwestern archaeologists Ann and Earl Morris in Canyon de Chelly, Arizona, and Kilmer appears in "a significant part" of it. According to Variety, Mercedes Kilmer said her father "always looked at emerging technologies with optimism as a tool to expand the possibilities of storytelling."

Handshake AI is recruiting improv actors to train AI models on human emotion and tone. The job listing calls for performers who can "recognize, express, and shift between emotions in a way that feels authentic and human." Handshake provides training data to OpenAI and other labs. Demand tripled as AI labs scaled up their need for training data, and the company surpassed a $150 million run rate in November. The work pays roughly $74 per hour. According to The Verge, many participants worry they're training AI that will eventually replace their careers.

Real-time video, custom brand models, and visual workflow builders: this week in AI tools

Six updates across video generation, image editing, and workflow automation.

Runway real-time video on NVIDIA Vera Rubin. Runway's Gen-4.5 video model was ported from NVIDIA Hopper to Vera Rubin in a single day, demonstrating production readiness for real-time video generation. The Vera Rubin platform delivers 50 petaflops of inference compute per GPU, enabling HD video generation with time-to-first-frame under 100ms. Runway's GWM-1 world model family also runs on Vera Rubin, simulating physics-aware environments for robotics training and interactive avatars.

Photoshop Rotate Object. A new Photoshop beta feature lets you rotate any object in a 2D image. AI Harmonize fills in what was behind it, handling perspective and lighting automatically.

Adobe and NVIDIA partnership for Firefly. Adobe and NVIDIA announced a strategic partnership to develop next-gen Firefly models.

Adobe Firefly Custom Models in public beta. Custom models are now in public beta, allowing you to train Firefly on your own assets. The model learns stroke weight, color palettes, lighting, and character features, generating new images that match your aesthetic. Models are private by default. Users must confirm they have rights to training assets. The tool is consumer-accessible, aimed at brands and creators needing consistent visual style at scale.

ElevenCreative Flows. A node-based canvas for chaining AI models together. Visual workflow builder for composing creative AI tasks.

KREA Node Agent. Describe what you want to build in plain English. The agent assembles the creative AI workflow automatically. Both Flows and the Node Agent are available now in their respective platforms.

A video breakdown tests whether DaVinci Resolve runs on MacBook NEO with 8GB of RAM. The test covers real-world editing workflows, timeline performance, and hardware limits.

Stories, projects, and links that caught our attention from around the web:

🎞️ David Keighley, an IMAX pioneer whose contributions to the format earned tributes from James Cameron, Christopher Nolan, and Denis Villeneuve, was omitted from the Oscars In Memoriam. His son Geoff publicly called out the Academy.

🎬 Peter Diamandis launched a Future Vision XPRIZE backed by Google with $3.5 million to fund optimistic sci-fi films. Five finalists get $100K; the winner receives $2.5M to produce a full feature.

💰 Netflix is reportedly paying up to $600 million for Ben Affleck's AI company InterPositive, which Affleck has been building since 2022 with proprietary training data.

✍️ The WGA will seek payment for members whose scripts are used to train AI models in upcoming AMPTP negotiations, arguing writers should be compensated for derivative uses of their work.

🚫 ByteDance suspended Seedance 2.0 global rollout after cease-and-desist letters from Disney, Paramount, and Netflix, with U.S. senators calling for a full shutdown.

📺 Google AI Futures Fund invested in Animaj, which produces AI-generated content for kids on YouTube with 22 billion views annually. Child safety groups have raised concerns.

We talked to the CEO of ComfyUI about the state of open-source AI video tools, workflow standardization, and where the community is headed. Watch the full conversation on YouTube.

Read the show notes or watch the full episode.

👔 Open Job Posts

AI Architect & Technical Strategist - Remote (Cuebric)

Machine Learning Engineer, PyTorch - Remote (Cuebric)

Director of Virtual Production - Los Angeles, CA (Eyeline Studios)

Virtual Production Producer - Seoul, South Korea (Eyeline Studios)

Virtual Production Technician - Seoul, South Korea (Eyeline Studios)

Unreal Engine Operator - Riyadh, Saudi Arabia (Pixomondo)

Motion Capture Systems Technician - Riyadh, Saudi Arabia (Pixomondo)

AI Video Producer and Editor - Seattle, WA (Amazon, T&C Creative Services)

Sr. Design Technologist, Elevated Shopping - New York, NY / Seattle, WA (Amazon)

Mocap TD (Virtual Production Team) - India (DNEG VFX India)

Virtual Production Supervisor - Dubai, UAE (Garage Studio)

Director of Machine Learning - Remote (Runway ML)

Applied Research Lead - Model Scaling - Remote (Runway ML)

📆 Upcoming Events

March 24

REDUCATION Los Angeles - RED Digital Cinema

Burbank, CA

April 14

Virtual Production Gathering Industry Day 2026

Breda, Netherlands

April 18

NAB Show 2026

Las Vegas, NV

May 12

MPTS - Media Production & Technology Show 2026

London, UK

May 26

AI on the Lot 2026

Culver City, CA

View the full event calendar and submit your own events here.

Thanks for reading VP Land!

Have a link to share or a story idea? Send it here.

Interested in reaching media industry professionals? Advertise with us.