We teamed up with VPX Lab to try to recreate the car chase from One Battle After Another using an iPhone. The question: how far can accessible gear and emerging AI tools take you when you stack them together — and where does the workflow break down?

The Goal

Recreate the One Battle After Another car chase, a multi-million-dollar Hollywood shoot, using an iPhone, AI relighting tools, and a hybrid compositing workflow. The experiment was a test of hybrid AI filmmaking.

We worked with Conrad Curtis at VPX Lab, whose mission is exactly this: figure out how high-end filmmaking workflows can scale down to accessible tools. The framing he kept coming back to: poor man's budget, rich man's production value.

The Workflow We Built

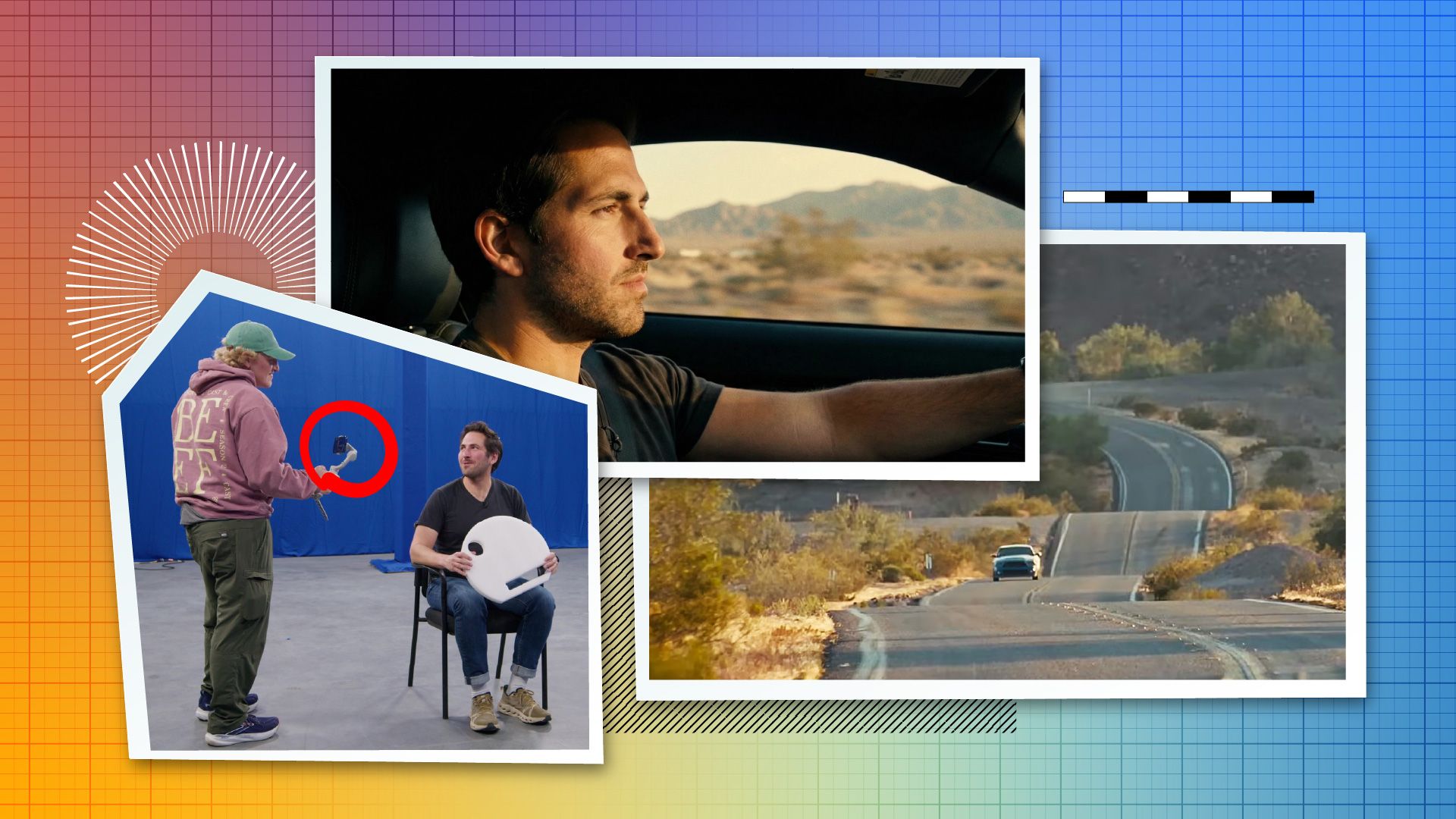

The shoot broke into two parts. For the exterior plates, we drove out to the River of Hills near the California-Arizona border. An Insta360 on the roof captured 360-degree reference footage, and an iPhone running the Blackmagic Camera app on a Ronin gimbal shot the driving plates.

For the interior car shots, we tested two approaches. The first was a poor man's process: shooting inside a real car with blue screen outside the windows and using Lightcraft Jetset for camera tracking.

The second was more ambitious: remove the car entirely. We scanned a Mustang with an XGRIDS LiDAR scanner and the LixelGO app to create a photorealistic 3D model, then shot the actor in a flat, evenly lit space with no car present. Lightcraft Jetset let Conrad frame the shots with the virtual car overlaid in the app. We've previously covered XGRIDS' LiDAR workflow and Leica Geosystems for film, and this shoot was a direct application of that work.

The lighting philosophy for the no-car shoot was to give the AI tools maximum information with flat, motivated base lighting, then push the look in post. Beeble SwitchLight would handle the relighting, and Alden Peters would composite in Nuke.

Speed vs. Control: The Trade-Offs with AI Workflows

The iPhone footage couldn't be pushed far enough. Its limited dynamic range meant that when we tried to restyle the footage to mimic harsh sunlight blasting through a car window, the result was what we're calling "yassified": over-processed and unnatural. No amount of Nuke work fixed it.

We also tested Kling O1 and Runway's video-to-video models as an alternative path. They were fast, taking minutes instead of days, but gave us no control. The actor's face warped, and nothing stayed consistent.

That's the core tension we kept running into: the original pipeline gave us full control but required time and expertise. AI video-to-video was fast but gave us almost nothing to steer. We started calling it the speed-versus-control continuum.

What Changed: Beeble SwitchX

A few months after the original shoot, Conrad called: Beeble had released SwitchX, a video-to-video model built specifically for relighting and restyling, with AI roto built in. We restyled the first frames with Nano Banana Pro and ran everything through SwitchX.

The result was the closest we'd gotten. Shadows landed correctly, and the rearview mirror reflected the environment. It wasn't perfect, since we still didn't have full control over the background, but it was the first time the speed-versus-control tradeoff started to collapse in a meaningful way.

This experiment was about pushing accessible gear to its limit and mapping where hybrid AI filmmaking actually stands today: what the tools can do, where they fall apart, and what the next version of this workflow looks like.

What We'd Do Differently

Pantomime is the enemy. If the actor touches something, it needs to be real: a real steering wheel, a real seat. Empty space reads as empty space.

iPhone dynamic range is the ceiling. There's no AI fix for information that wasn't captured. If the footage can't hold highlights and shadows simultaneously, the relighting tools have nothing to work with.
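A toy sketch of why clipped highlights can't be recovered downstream. It assumes a hypothetical sensor that stores any linear scene value above a fixed clip point as the clip point itself; real camera pipelines (log encoding, tone mapping, noise) are far more complex, and none of the numbers below describe the iPhone. The helper names `capture` and `relight` are illustrative only.

```python
def capture(scene, clip=1.0):
    """Hypothetical sensor: any linear value above `clip` is stored as `clip`."""
    return [min(v, clip) for v in scene]


def relight(frame, gain):
    """Stand-in for any relighting step: it only ever sees the stored values."""
    return [v * gain for v in frame]


# Hypothetical linear intensities: a shadow, a bright window, and direct sun.
scene = [0.05, 1.5, 4.0]
stored = capture(scene)
print(stored)  # [0.05, 1.0, 1.0]

# Pulling exposure down two stops (gain 0.25) cannot separate the window
# from the sun: both were stored as 1.0, so both come out identical.
darker = relight(stored, 0.25)
print(darker)
```

The point of keeping it this small: any relighting step, AI or otherwise, works from the stored values, and no function can map two identical inputs to different outputs. A model can invent plausible detail in a clipped region, but it can't recover what the scene actually held.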

Motivated base lighting isn't optional. Flat, even lighting gives AI tools the most information, but the lighting direction needs to be right from the start, or you're fighting it through every step of post.

Links & Resources

Tools Used:

Lightcraft Jetset — we previously covered Lightcraft Jetset's major update

Nano Banana Pro (Google Gemini image generation)
